Our team of FPGA designers can provide you with the experience and expertise to complete your project on time and on budget. If you are working with AMD (formerly Xilinx) or Intel (formerly Altera) reference designs, need some custom IP designed or just need timing or area optimisation, we are here to give you the help you need.

Block/Platform Designer

If you use Vivado/ISE or Quartus, you are probably familiar with Block Designer or Platform Designer. We can use these tools to assemble IP blocks into a working system, either starting from a reference design or building from the ground up. Where do these IP blocks come from? We can take your existing IP, write our own, or draw on the large catalogue of free and paid-for IP available from the vendor libraries.

We have worked with a wide range of FPGA products, from small Spartan family chips through to large Stratix, Kintex and Virtex devices. We also have experience with programmable SoC (System on Chip) families such as AMD's Zynq, where our combined software and hardware skills have allowed us to efficiently create high-performance bespoke solutions.

We are comfortable with both major vendors, so we are well placed to port your design from one to the other.

Custom IP Design

If you provide a complete specification of what you want, we can go away and create it for you. But we understand that sometimes this is unrealistic. That's why we're happy to run projects in collaboration with you. We'll talk through the design with you and help put together the initial specification. As development goes on, we can suggest improvements or change our approach if your circumstances change. We're flexible and experienced at working autonomously, fully integrated with your team, or anywhere in between.

An IP block on its own is not useful though! We will verify the design in simulation or on platform and provide you with all the synthesis results, either stand-alone or integrated into your system.

Find out more about our IP development.

Resource/Timing Optimisation

We get it: it's annoying when you put all this effort into creating your system and then it doesn't meet timing or doesn't fit on the FPGA you were targeting. We deal with this day in and day out, and as a result we're experts at writing optimal code and at optimising yours. Whether you have a high-speed design or want to downsize your FPGA to reduce costs, we can help you.

For this kind of work, we tend to do a short investigation of your code base to see what potential savings exist. This allows us to report back to you before you put too much effort in. If you feel there is value in engaging us to do the optimisation, we can get to work on it, or you can use the outcome of our investigation to make the changes yourself.

Our Approach to Verification

In order to ensure high-quality designs, test automation is essential. We start from the bottom up with thorough testbenches covering all significant sub-blocks and achieving test coverage that just isn't possible with higher level simulations. Not only does this ensure quality, but having a testbench for each block improves reusability and can greatly reduce time spent debugging.

An accurate software model of the system can also be a huge asset in some cases. Not only does this allow algorithms to be proven and refined before the hardware is developed, but it also provides a ready source of test vectors to drive and verify the hardware design. Our designers are experienced at creating this style of testbench and, because we also have a software team in-house, we are able to help develop your software too.
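As a rough illustration of how a software model can supply test vectors, here is a minimal Python sketch. Everything in it is an assumption made for the example: the block under test (a hypothetical 4-tap moving-sum filter with 8-bit inputs), the function names, and the "stimulus expected" hex line format, which is simply one layout that an HDL testbench could read back.

```python
from typing import Iterable, List

WIDTH = 8  # assumed input width in bits (hypothetical block)
TAPS = 4   # assumed number of taps (hypothetical block)


def moving_sum_model(samples: Iterable[int], taps: int = TAPS) -> List[int]:
    """Reference model: running sum of the last `taps` input samples."""
    mask = (1 << WIDTH) - 1
    window = [0] * taps          # models a shift register reset to zero
    results = []
    for sample in samples:
        # Shift in the new sample, truncated to the input width
        window = window[1:] + [sample & mask]
        results.append(sum(window))
    return results


def to_vector_lines(stimulus: List[int], expected: List[int]) -> List[str]:
    """Format each stimulus/expected pair as one line of hex text."""
    return [f"{s:02x} {e:03x}" for s, e in zip(stimulus, expected)]


stimulus = [1, 2, 3, 4, 5, 255]
expected = moving_sum_model(stimulus)
lines = to_vector_lines(stimulus, expected)

for line in lines:
    print(line)
```

The same model can be exercised on its own while the algorithm is being refined, and the generated lines can later be written to a file for the hardware testbench to compare against.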

Waveform Driven Testbench Generation: create your own testbench with our free online tool

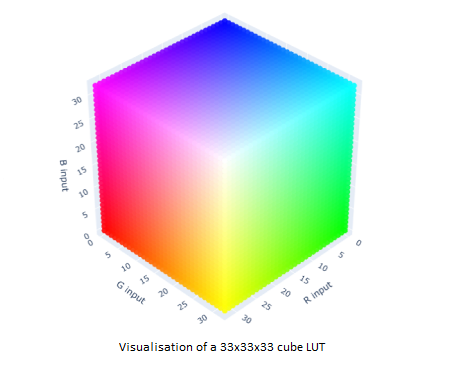

Waveform Driven Testbench Generation

We have created a testbench generator tool for cases where you have a waveform, for example from a data sheet, and wish to create a simple self-checking testbench. This is particularly useful for those working in functional safety domains, where each IP needs unit tests in a directed-test format and a waveform is needed to document the design. You can find more details on the testbench generator tool here and create your own testbench through a waveform editor.
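To give a feel for the general idea of turning a waveform into directed checks, here is a small Python sketch. It is not the tool described above and does not reflect its input format or output; it assumes a simple wave-string notation (loosely in the style of WaveDrom, where `0` and `1` set a level and `.` holds the previous one), and the signal names `en` and `q` are hypothetical.

```python
from typing import Dict, List, Tuple


def expand_wave(wave: str) -> List[int]:
    """Expand a wave string such as '01.0' into one value per cycle."""
    values: List[int] = []
    for ch in wave:
        if ch == ".":
            if not values:
                raise ValueError("wave cannot start with '.'")
            values.append(values[-1])   # hold the previous level
        elif ch in "01":
            values.append(int(ch))
        else:
            raise ValueError(f"unsupported wave character: {ch!r}")
    return values


def directed_steps(
    inputs: Dict[str, str], outputs: Dict[str, str]
) -> List[Tuple[int, Dict[str, int], Dict[str, int]]]:
    """Build (cycle, values to drive, values to expect) for every cycle."""
    driven = {name: expand_wave(w) for name, w in inputs.items()}
    checked = {name: expand_wave(w) for name, w in outputs.items()}
    lengths = {len(v) for v in list(driven.values()) + list(checked.values())}
    if len(lengths) != 1:
        raise ValueError("all waves must cover the same number of cycles")
    cycles = lengths.pop()
    return [
        (
            c,
            {name: vals[c] for name, vals in driven.items()},
            {name: vals[c] for name, vals in checked.items()},
        )
        for c in range(cycles)
    ]


# Hypothetical waveform: 'en' is driven, 'q' is the expected response
steps = directed_steps({"en": "01.0"}, {"q": "0.1."})
for cycle, drive, expect in steps:
    print(cycle, drive, expect)
```

Each step pairs the values to drive with the values to check in that cycle, which is the essence of a self-checking directed test; a real generator would go on to emit HDL from these steps.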